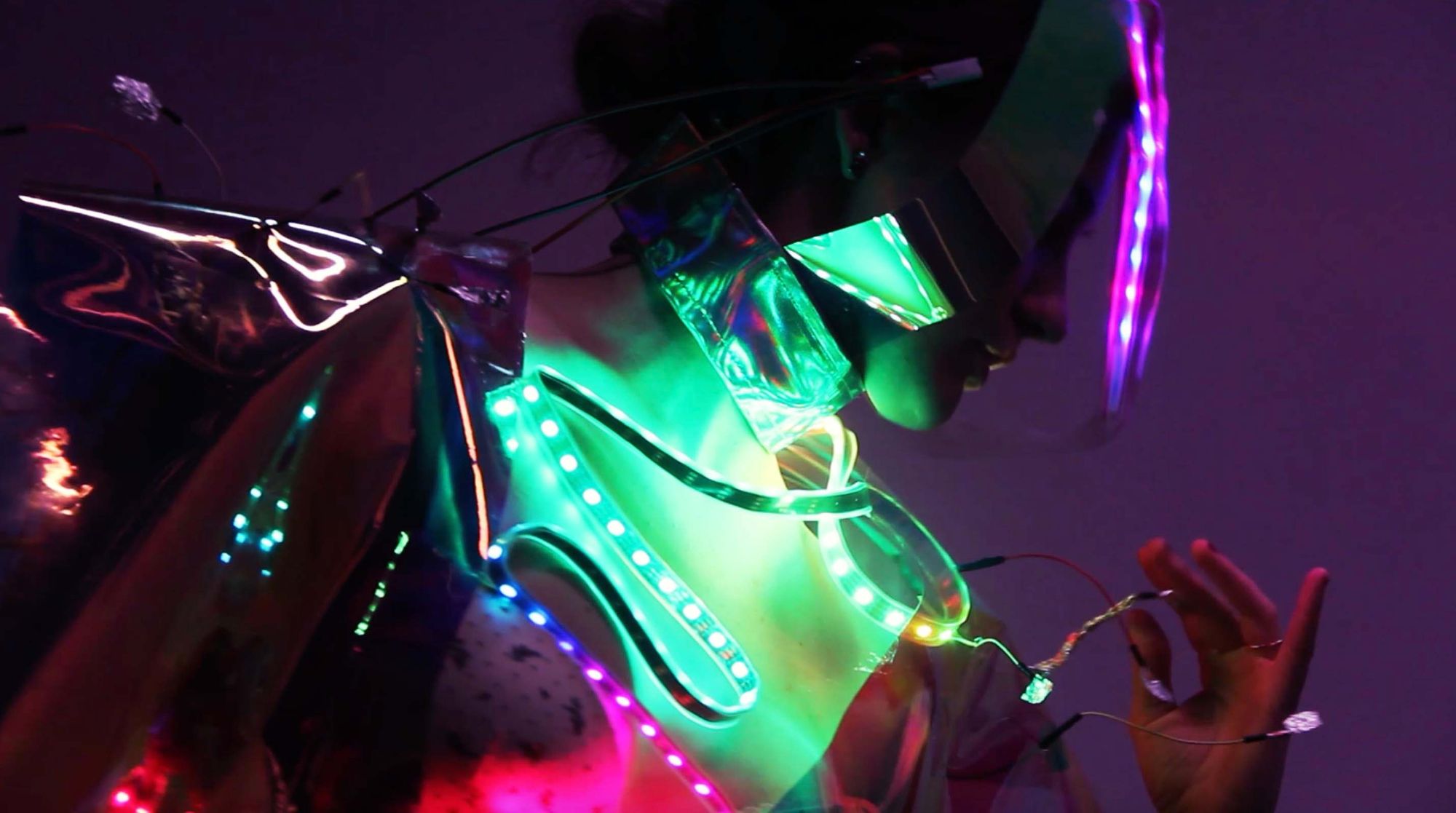

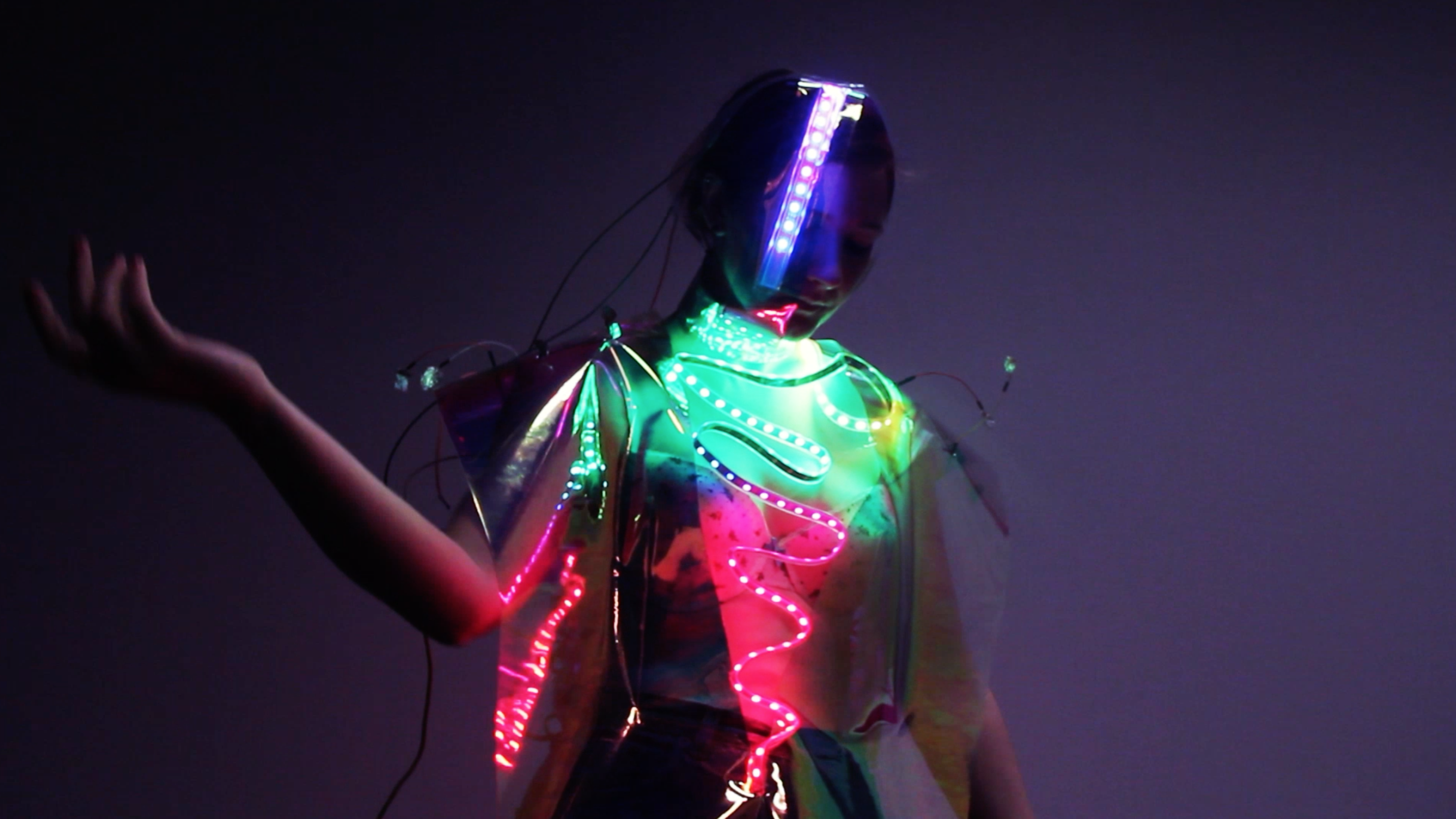

Touch Me is a piece of touch- and gesture-sensitive clothing that is worn by a performer but controlled by other individuals responding to the performance.

The dancer's tentacles are linked to different pitches from a sound synthesizer. As the dancer moves, the audience can respond to the performance by controlling the visual lighting patterns on the dancer's clothing via a machine learning model trained to recognize different hand gestures. This feedback affects the dancer's performance and also influences the experience of other audience members.

Credits:

Creator: Friendred

Dancer: Amy Louise Cartwright